The discussion around intellectual property applies both to humans and to generative models. Or rather, it applies to the companies that "create" such models. In this regard, there is a significant innovation in the world of digital image generation: the introduction of specific watermarks for images created with DALL-E 3. This promises to make the boundary between artificially generated and human content not only more defined but also more transparent.

How to recognize images created by generative models? With the watermark on DALL-E 3, for starters

OpenAI recently introduced a feature that could change the way we perceive digital content: a watermarking system for images generated by DALL-E 3. This update aims to establish a bridge between the increasing production of digital content and the need for greater transparency and reliability.

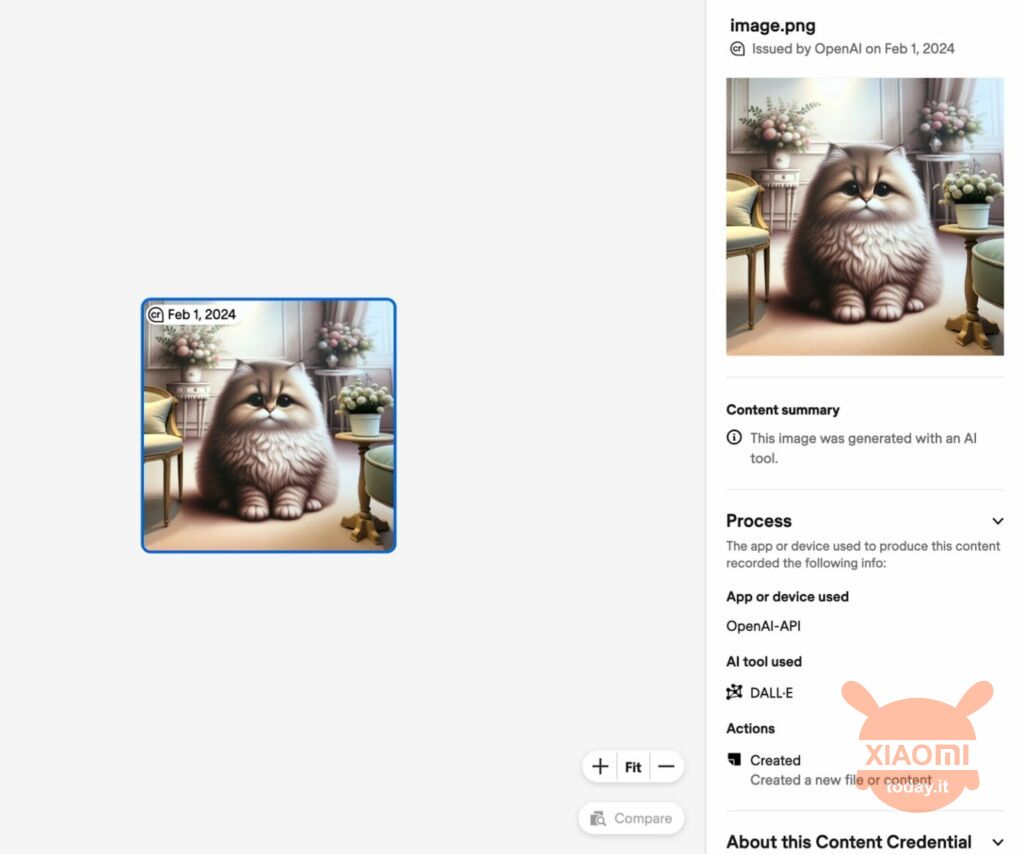

The watermark system implemented on DALL-E 3 has two components: an invisible part integrated into the image metadata and a visible symbol, the CR logo, discreetly positioned in the upper left corner of the image. The objective is twofold: on the one hand, to make it easier to identify the origin of a digital image, allowing users to verify whether it was generated by artificial intelligence; on the other, to keep the visual quality of the image unchanged despite the added elements.

This development fits into a broader context, supported by the Coalition for Content Provenance and Authenticity (C2PA), a consortium involving technology giants such as Adobe and Microsoft. Its members are committed to promoting the authenticity of digital content through the Content Credentials watermark, thus helping to draw a clear distinction between human creative work and work generated by AI.

Despite the enthusiasm for this innovation, significant challenges remain, mainly related to the possibility that the metadata may be removed, deliberately or accidentally, when images are shared on social media or other digital platforms. This vulnerability highlights the complexity of fighting disinformation and the need for effective strategies for verifying digital content.
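
To make the metadata problem concrete, here is a minimal Python sketch of a heuristic check for embedded provenance data. It rests on an assumption: that C2PA manifests are stored in JUMBF boxes, so the byte strings `jumb` and `c2pa` tend to appear in files that carry them. The function names are hypothetical, and this is not how OpenAI or the C2PA tooling verifies images. It only scans for markers and does not validate any signature, so it can produce false positives or miss data stored in other ways.

```python
from pathlib import Path

# Byte strings assumed to accompany embedded C2PA data (JUMBF box type and
# manifest label). This is a rough heuristic, not a manifest validation.
C2PA_MARKERS = (b"c2pa", b"jumb")


def has_c2pa_marker(data: bytes) -> bool:
    """Return True if the raw bytes contain any of the assumed markers."""
    return any(marker in data for marker in C2PA_MARKERS)


def file_has_c2pa_marker(path: str) -> bool:
    """Read a file from disk and scan its bytes for the assumed markers."""
    return has_c2pa_marker(Path(path).read_bytes())
```

If a platform re-encodes an image and drops its metadata, a check like `file_has_c2pa_marker("shared.png")` would likely return `False` even for an image that originally came from DALL-E 3, which is exactly the weakness described above.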