Wearables are among the products that, in the near future, will have to integrate increasingly cutting-edge technologies. It is no secret that they could soon replace smartphones, and the fact that some already offer full YouTube integration is a clue. Cornell University, however, wants to take two steps forward rather than one with EarIO: the first headphones that use sonar to read the user's facial expressions. Let's look at the details.

Although still a prototype, the EarIO headphones developed at Cornell University read facial expressions using sonar.

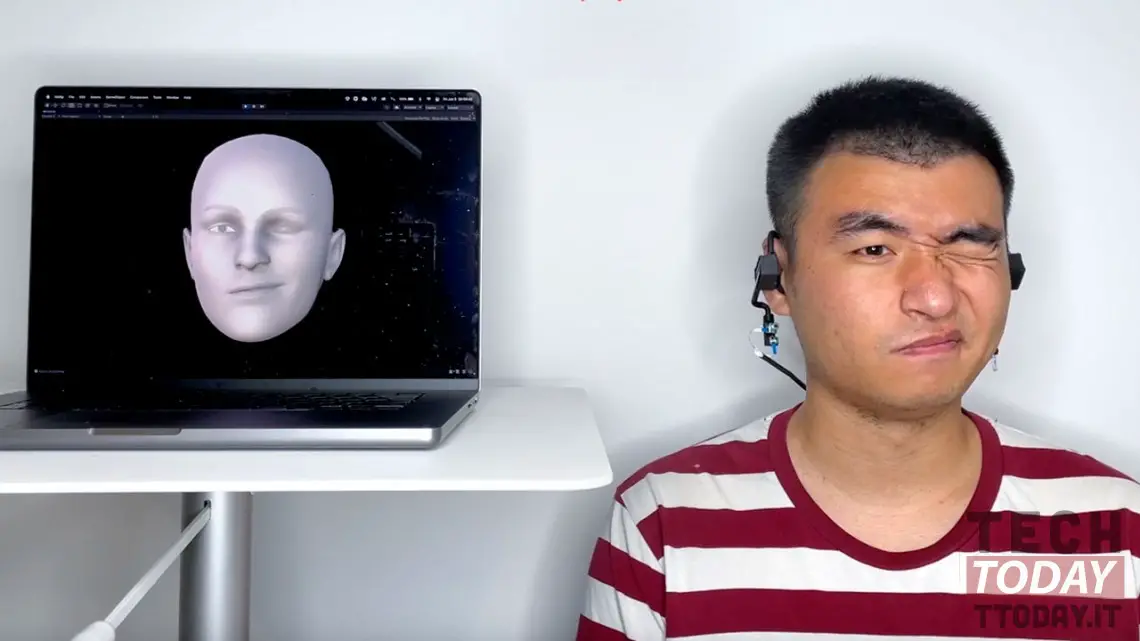

Engineers at Cornell University have developed sonar-equipped headphones called EarIO. They can read the wearer's facial expressions and create a dynamic avatar that mirrors them. Such a system requires far less energy and computing power than conventional cameras.

The headphones' speakers emit sound that bounces off the user's cheeks, a microphone captures how the echo changes, and a deep learning algorithm uses this data to build a three-dimensional avatar. According to the developers, EarIO can stream facial movements to a mobile device in real time, so the avatar can be used in video calls.
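
The article does not describe EarIO's signal processing in detail. As a rough illustration of the general active-sonar principle (emit a known sound, correlate the microphone recording against it, read off the echo delay), here is a minimal Python sketch. The 16 to 20 kHz chirp, the 48 kHz sample rate, the simulated echo, and all function names are hypothetical choices for illustration, not details of EarIO; the real system feeds echo data into a deep learning model rather than computing a single distance.

```python
import math

# Assumption: approximate speed of sound in air at about 20 °C, in m/s.
SPEED_OF_SOUND = 343.0


def linear_chirp(f0, f1, duration, fs):
    """Return samples of a linear frequency sweep from f0 to f1 Hz (hypothetical probe signal)."""
    n = int(round(duration * fs))
    k = (f1 - f0) / duration  # sweep rate in Hz per second
    return [math.sin(2 * math.pi * (f0 * t + 0.5 * k * t * t))
            for t in (i / fs for i in range(n))]


def cross_correlate(received, template):
    """Correlate the template against the recording at every lag where it fully fits."""
    m = len(template)
    return [sum(received[lag + j] * template[j] for j in range(m))
            for lag in range(len(received) - m + 1)]


def echo_distance(received, template, fs):
    """Estimate the reflector distance from the strongest correlation peak."""
    corr = cross_correlate(received, template)
    best_lag = max(range(len(corr)), key=lambda i: abs(corr[i]))
    round_trip_s = best_lag / fs
    # The sound travels to the reflector and back, so halve the path length.
    return best_lag, round_trip_s * SPEED_OF_SOUND / 2


# Simulated recording: a single attenuated echo arriving 14 samples late.
fs = 48_000
chirp = linear_chirp(16_000, 20_000, 0.001, fs)
delay = 14
received = [0.0] * 100
for j, s in enumerate(chirp):
    received[delay + j] += 0.3 * s

lag, dist = echo_distance(received, chirp, fs)
print(lag, round(dist, 3))  # → 14 0.05
```

A 14-sample delay at 48 kHz corresponds to a round trip of roughly 0.29 ms, or about 5 cm to the reflector. Tracking how such echo profiles shift from frame to frame, rather than a single distance, is the kind of signal a learning model could map to facial movements.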


Cheng Zhang, principal investigator at Cornell's Smart Computer Interfaces for Future Interactions (SciFi) Lab, stated:

> Such camera-powered devices are large, heavy, and energy-intensive, which is a big deal for wearable gadgets. They also collect a lot of personal information. With audio, we get around these problems.

However, despite being much more energy efficient than cameras, the device lasts only three hours on a charge. The team hopes to solve this problem too, and also wants to make EarIO a plug-and-play device: currently, the system needs 32 minutes to learn the wearer's face before first use.